Operating Systems: Three Easy Pieces Ch. 26

Concurrency: An Introduction

Earlier abstractions: virtual CPUs → illusion of multiple CPUs; address space → illusion of a large, private virtual memory

New abstraction for a single running process → thread

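- Multi-threaded program: more than one point of execution (multiple PCs), with all threads sharing the same address space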

- State of a single thread

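- Has its own PC (program counter) and its own private set of registers

- Context switch: the register state of the running thread is saved and the state of the next thread is restored

- Processes: state is saved to a process control block (PCB)

- Threads: state is saved to thread control blocks (TCBs), one per thread of the process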

- Major differences between threads and processes (beyond the address space staying the same across a thread switch)

- Stack

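- Single-threaded: a single stack, usually residing at the bottom of the address space

- Multi-threaded: each thread runs independently, so there is one stack per thread

- Thread-local storage: any stack-allocated variables, parameters, and return values are placed on the stack of the relevant thread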

- Reasons for using threads

- Parallelism

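- Executing the program on multiple processors

- Parallelization: the task of transforming a single-threaded program into one that does its work on multiple CPUs

- Avoiding blocked program progress due to slow I/O

- While one thread waits on I/O, the scheduler can switch to other threads that are ready to run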

- Threading enables the overlap of I/O with other activities within a single program

- Much like multiprogramming did for processes across programs

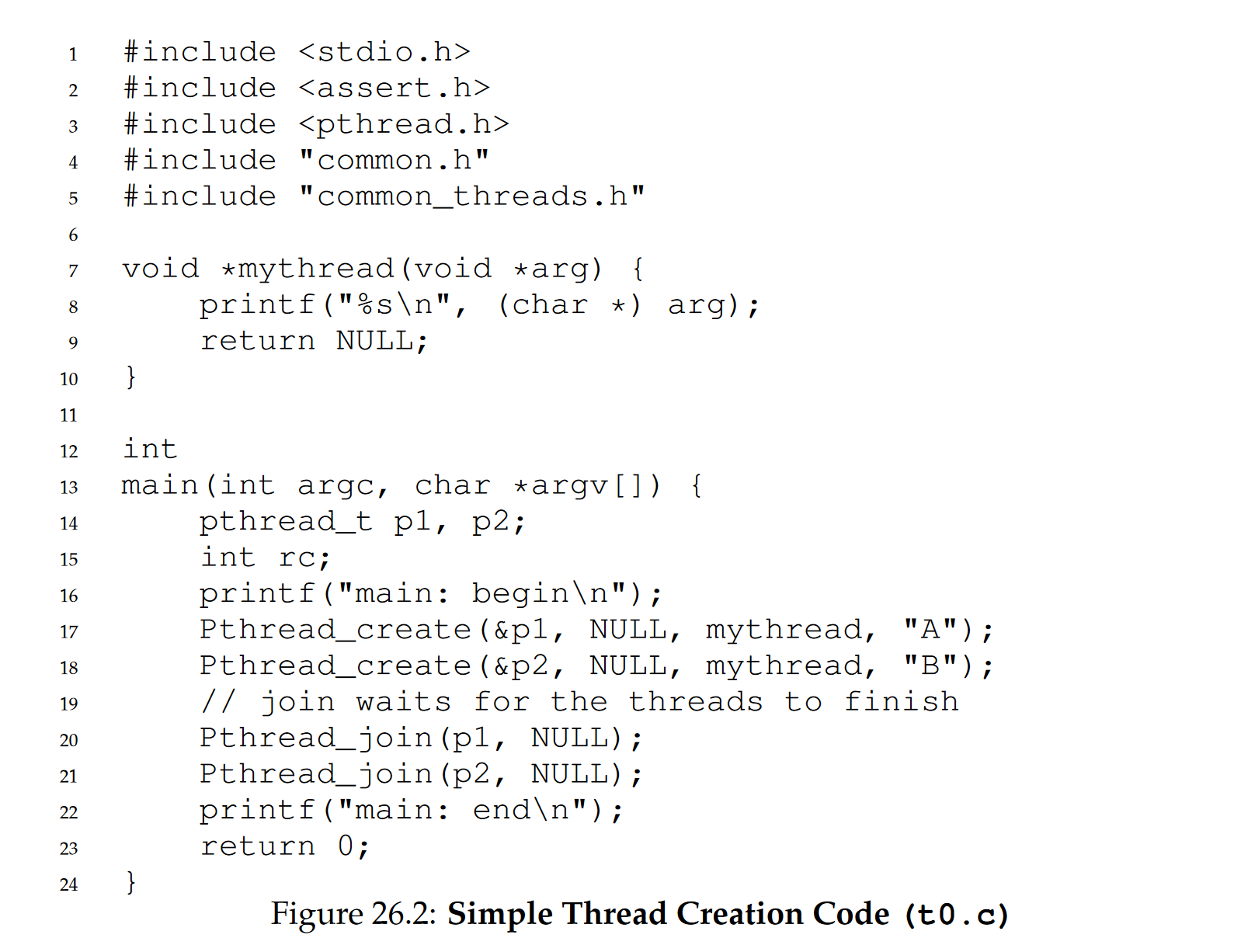

The main thread calls pthread_join(), which waits for a particular thread to complete. It does so twice, ensuring that T1 and T2 both run and complete before the main thread is allowed to run again.
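The create-then-join pattern described above can be sketched as follows. This is a minimal sketch, not the book's exact listing: the names `mythread` and `run_two_threads` are chosen here for illustration, and the `assert` checks simply assume thread creation succeeds.

```c
#include <assert.h>
#include <pthread.h>
#include <stdio.h>

/* Thread body: prints the string passed in as its argument. */
static void *mythread(void *arg) {
    printf("%s\n", (char *)arg);
    return NULL;
}

/* Creates T1 and T2, then waits for each of them in turn.
 * Returns 0 once both threads have completed. */
int run_two_threads(void) {
    pthread_t p1, p2;
    int rc;

    printf("main: begin\n");
    rc = pthread_create(&p1, NULL, mythread, "A");
    assert(rc == 0);
    rc = pthread_create(&p2, NULL, mythread, "B");
    assert(rc == 0);

    /* "A" and "B" may print in either order; the scheduler decides. */
    rc = pthread_join(p1, NULL);   /* blocks until T1 has finished */
    assert(rc == 0);
    rc = pthread_join(p2, NULL);   /* blocks until T2 has finished */
    assert(rc == 0);

    printf("main: end\n");
    return 0;
}
```

Calling `run_two_threads()` from `main` and compiling with `-pthread` should always print "main: begin" first and "main: end" last; only the order of the two middle lines is up to the scheduler.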

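Thread creation is similar to a function call, except that the called routine runs as a new thread of execution, independently of the caller, instead of running to completion before the caller continues. Computers are hard enough to understand without concurrency; with concurrency, it gets worse.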

- Shared Data

- Let us imagine a simple example where two threads wish to update a global shared variable.

- The surprise: computers are supposed to produce deterministic results, yet the final value of the shared variable can differ from run to run

- Uncontrolled scheduling

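- Each thread, when running, has its own private registers; the registers are virtualized by the context-switch code that saves and restores them

- Non-deterministic schedule: a timer interrupt can force a context switch in the middle of the load/add/store sequence that updates the shared variable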

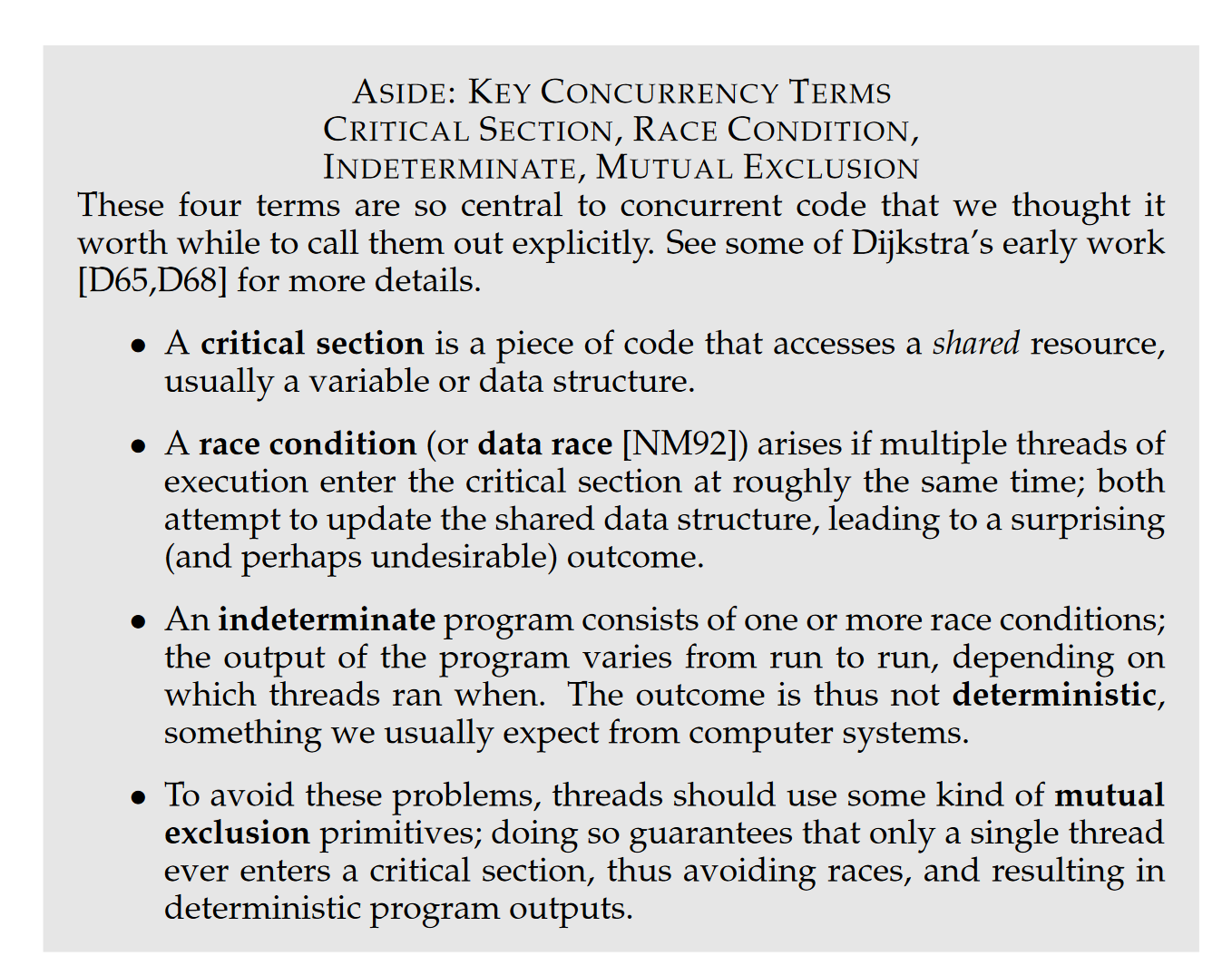

- Race condition (more specifically, a data race): the result depends on the timing of the code's execution

- We call such a result indeterminate: it is not known what the output will be, and it is likely to differ across runs
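The shared-counter example from these notes can be reproduced with a short, deliberately racy sketch. The names `unsafe_worker` and `run_unsafe_counter` and the `loops` parameter are assumptions made for illustration. Formally, the unsynchronized update is a data race and therefore undefined behavior in C; it appears here only to demonstrate the problem.

```c
#include <assert.h>
#include <pthread.h>

/* Shared by both threads; volatile only keeps the compiler from
 * collapsing the loop, it does NOT make the update atomic. */
static volatile int counter = 0;

static void *unsafe_worker(void *arg) {
    int loops = *(int *)arg;
    for (int i = 0; i < loops; i++)
        counter = counter + 1;   /* load, add, store: three separate steps */
    return NULL;
}

/* Runs two threads that each add 1 to counter `loops` times.
 * Returns the final value, which is NOT guaranteed to be 2 * loops. */
int run_unsafe_counter(int loops) {
    pthread_t p1, p2;
    int rc;

    counter = 0;
    rc = pthread_create(&p1, NULL, unsafe_worker, &loops);
    assert(rc == 0);
    rc = pthread_create(&p2, NULL, unsafe_worker, &loops);
    assert(rc == 0);
    rc = pthread_join(p1, NULL);
    assert(rc == 0);
    rc = pthread_join(p2, NULL);
    assert(rc == 0);
    return counter;
}
```

With a large `loops` value on a multiprocessor, the returned total is often smaller than `2 * loops` and tends to change between runs, which is the indeterminate behavior described above.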

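- Critical section: a piece of code that accesses a shared variable (or other shared resource) and must not be executed concurrently by more than one thread

- Mutual exclusion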

- This property guarantees that if one thread is executing within the critical section, the others will be prevented from doing so.
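One common way to get mutual exclusion with POSIX threads is a mutex around the critical section. The sketch below assumes a shared counter updated by two threads; the names `locked_worker` and `run_locked_counter` are hypothetical, and locks themselves are a later topic rather than part of this chapter.

```c
#include <assert.h>
#include <pthread.h>

static int counter = 0;
static pthread_mutex_t lock = PTHREAD_MUTEX_INITIALIZER;

static void *locked_worker(void *arg) {
    int loops = *(int *)arg;
    for (int i = 0; i < loops; i++) {
        pthread_mutex_lock(&lock);     /* enter the critical section */
        counter = counter + 1;         /* only one thread at a time is here */
        pthread_mutex_unlock(&lock);   /* leave the critical section */
    }
    return NULL;
}

/* Runs two threads that each add 1 to counter `loops` times
 * and returns the final value of the counter. */
int run_locked_counter(int loops) {
    pthread_t p1, p2;
    int rc;

    counter = 0;
    rc = pthread_create(&p1, NULL, locked_worker, &loops);
    assert(rc == 0);
    rc = pthread_create(&p2, NULL, locked_worker, &loops);
    assert(rc == 0);
    rc = pthread_join(p1, NULL);
    assert(rc == 0);
    rc = pthread_join(p2, NULL);
    assert(rc == 0);
    return counter;
}
```

Because the increment can no longer be interleaved, the result is exactly `2 * loops` on every run, at the cost of a lock and unlock per update.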

- Atomicity

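- Atomic means "as a unit", which is sometimes expressed with the phrase “all or nothing”

- Transaction: the grouping of many actions into a single atomic action

- Thought experiment: assume a single instruction adds a value to a memory location and the hardware guarantees that it executes atomically; it could then not be interrupted mid-update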
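The atomic add in these notes is a hypothetical instruction. As a real-world analogue, and not the book's method, C11 offers `atomic_fetch_add` from `<stdatomic.h>`, which performs the read-modify-write as one indivisible step. The sketch below assumes a C11 compiler with atomics support; `atomic_worker` and `run_atomic_counter` are illustrative names.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>

static atomic_int counter;

static void *atomic_worker(void *arg) {
    int loops = *(int *)arg;
    for (int i = 0; i < loops; i++)
        atomic_fetch_add(&counter, 1);   /* indivisible read-modify-write */
    return NULL;
}

/* Runs two threads that each atomically add 1 to counter `loops` times
 * and returns the final value of the counter. */
int run_atomic_counter(int loops) {
    pthread_t p1, p2;
    int rc;

    atomic_store(&counter, 0);
    rc = pthread_create(&p1, NULL, atomic_worker, &loops);
    assert(rc == 0);
    rc = pthread_create(&p2, NULL, atomic_worker, &loops);
    assert(rc == 0);
    rc = pthread_join(p1, NULL);
    assert(rc == 0);
    rc = pthread_join(p2, NULL);
    assert(rc == 0);
    return atomic_load(&counter);
}
```

No update is lost here, so the total is exactly `2 * loops`. This only works because the whole critical section is a single add; larger critical sections still need the synchronization primitives discussed next.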

- Synchronization primitives

- Built from hardware support combined with OS support

- Used to build multi-threaded code that accesses critical sections in a synchronized and controlled manner

- Waiting for another thread: one thread must wait for another to complete some action before it continues

- Sleeping/waking interaction

- Condition variables
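The sleeping/waking interaction can be sketched with a POSIX condition variable: a parent thread sleeps until a child signals that it has finished. This is a minimal sketch under assumptions; the `done` flag and the names `child` and `wait_for_child` are chosen here for illustration, and condition variables are covered in detail later in the book.

```c
#include <assert.h>
#include <pthread.h>

static int done = 0;   /* state the parent is waiting on */
static pthread_mutex_t m = PTHREAD_MUTEX_INITIALIZER;
static pthread_cond_t c = PTHREAD_COND_INITIALIZER;

static void *child(void *arg) {
    (void)arg;
    pthread_mutex_lock(&m);
    done = 1;                    /* record that the action is complete */
    pthread_cond_signal(&c);     /* wake the parent if it is sleeping */
    pthread_mutex_unlock(&m);
    return NULL;
}

/* Starts a child thread and sleeps until the child reports completion.
 * Returns the value of the done flag (1 after the child has signalled). */
int wait_for_child(void) {
    pthread_t p;
    int rc;

    done = 0;
    rc = pthread_create(&p, NULL, child, NULL);
    assert(rc == 0);

    pthread_mutex_lock(&m);
    while (done == 0)                /* re-check the flag after every wakeup */
        pthread_cond_wait(&c, &m);   /* releases m while asleep, reacquires on wake */
    pthread_mutex_unlock(&m);

    rc = pthread_join(p, NULL);
    assert(rc == 0);
    return done;
}
```

The `while` loop around `pthread_cond_wait` matters: it handles both the case where the child finishes before the parent starts waiting and the possibility of spurious wakeups.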